Absurd Music Visualizations

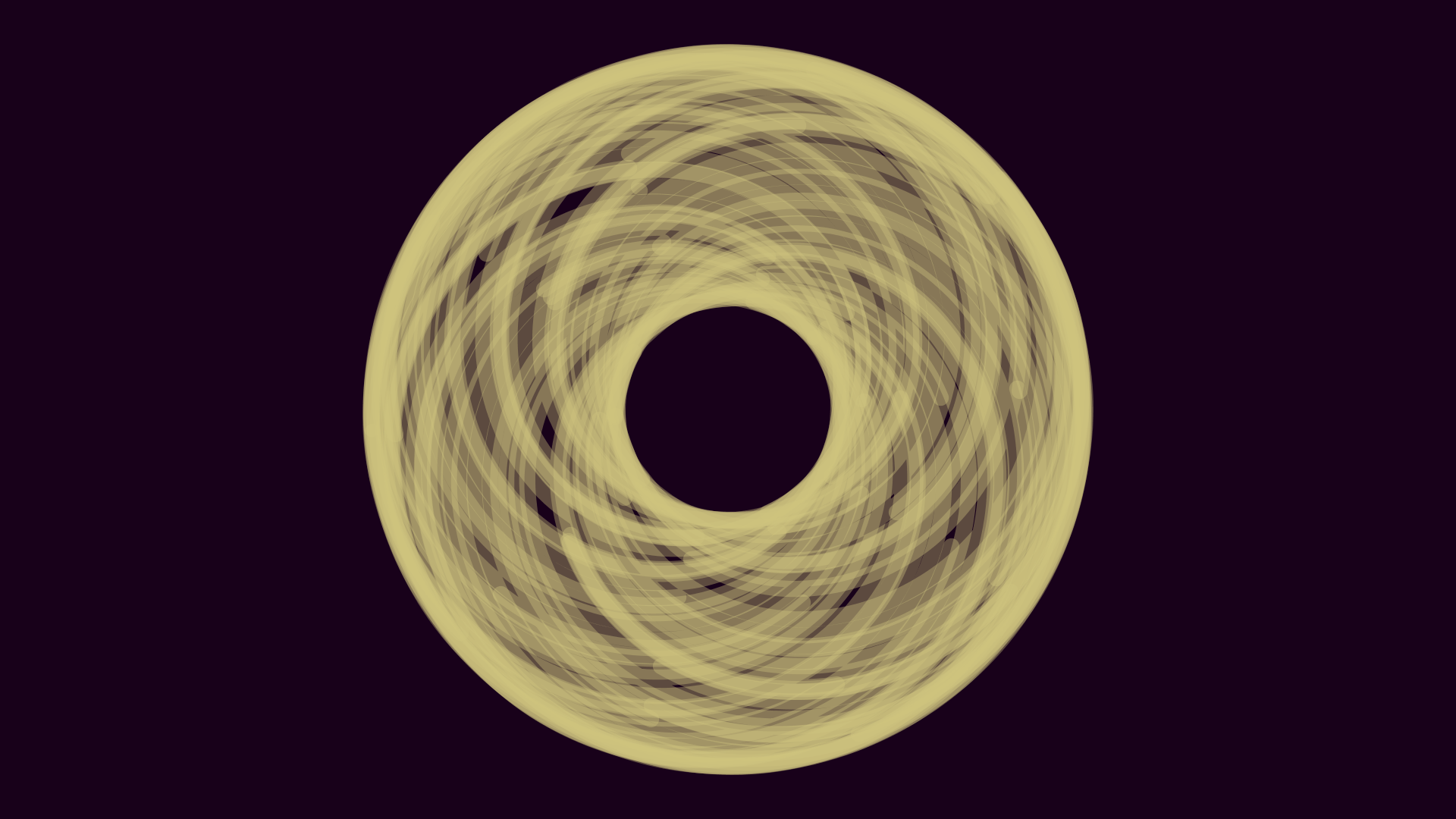

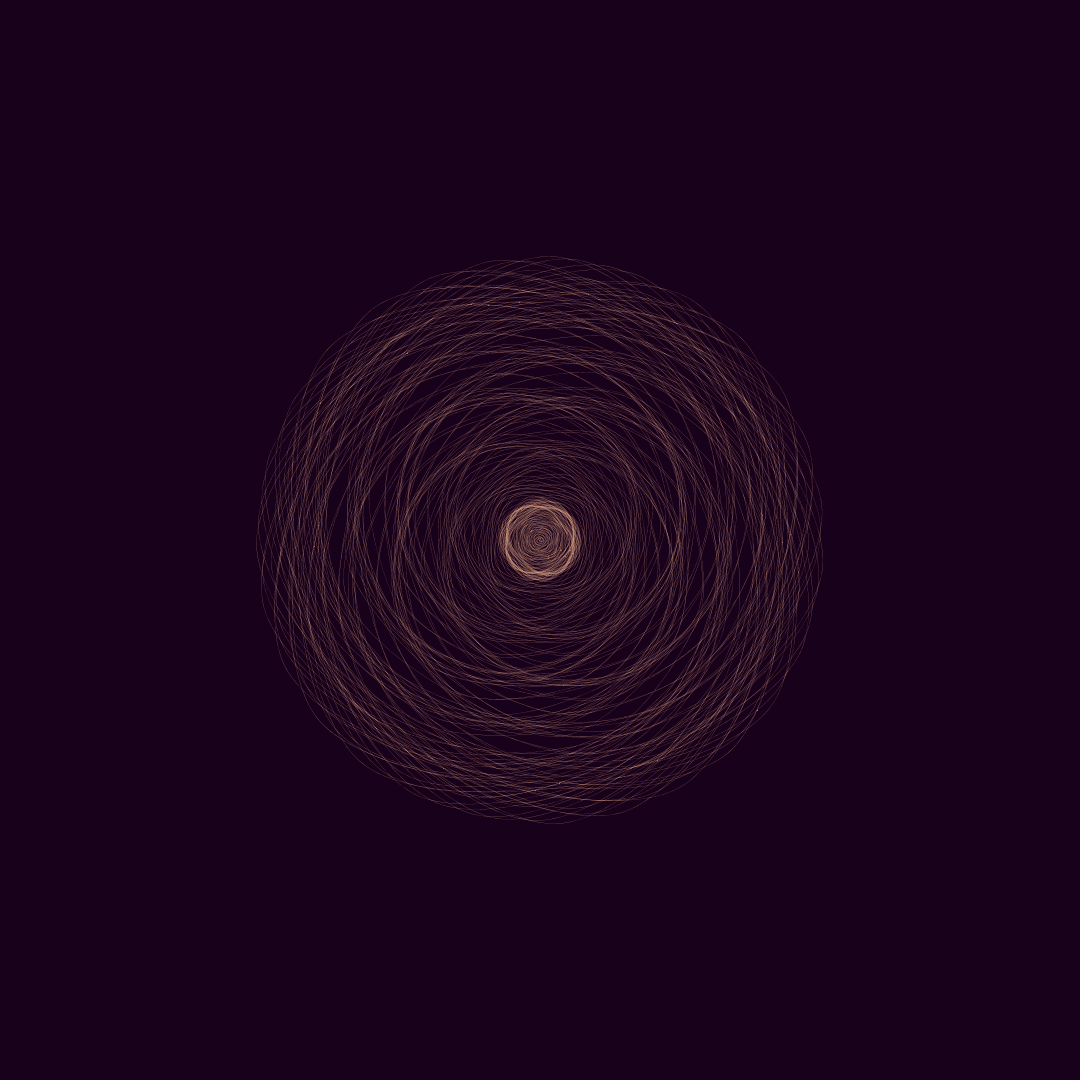

I’ve begun a series of audio-reactive music visualizations. They look like this:

Each entry uses audio data to inform procedurally generated visuals in different (and hopefully novel) ways. Most computational artwork relies heavily on elements of randomness, such as Perlin noise. I find that sampling audio data is a nice form of structured randomness. I hope you enjoy my synesthesia playground.
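To make "structured randomness" a bit more concrete, here is a minimal sketch in Python. It is not the code behind these pieces, only an illustration of the idea. Instead of pulling a value from a noise function each frame, it measures the loudness (RMS) of the slice of audio that plays during that frame and maps it to a visual parameter. The sample rate, frame rate, the synthetic test tone, and the names `rms_per_frame` and `radius_for` are all assumptions made up for the example.

```python
import math

SAMPLE_RATE = 44100  # audio samples per second (assumed)
FPS = 30             # video frames per second (assumed)


def synth_signal(seconds=1.0):
    """Stand-in for decoded audio: a 220 Hz tone that swells and then fades."""
    n = int(SAMPLE_RATE * seconds)
    return [
        math.sin(2 * math.pi * 220 * i / SAMPLE_RATE) * math.sin(math.pi * i / n)
        for i in range(n)
    ]


def rms_per_frame(samples, sample_rate=SAMPLE_RATE, fps=FPS):
    """Return one loudness value (RMS) for each video frame's slice of audio."""
    hop = sample_rate // fps  # number of audio samples per video frame
    levels = []
    for start in range(0, len(samples) - hop + 1, hop):
        window = samples[start:start + hop]
        levels.append(math.sqrt(sum(s * s for s in window) / hop))
    return levels


def radius_for(level, base=20.0, gain=200.0):
    """Hypothetical mapping from loudness to a shape radius in pixels."""
    return base + gain * level


levels = rms_per_frame(synth_signal())
radii = [radius_for(level) for level in levels]
```

Unlike a noise function, the values in `radii` rise and fall with the sound itself, so whatever they drive on screen stays in step with the music.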

If you’re interested in collaborating, lemme know!

how to watch

some tools i’m using

- The Processing programming language (plus the Minim library for audio data).

- Three.js & GLSL along with CCapture.

- FFmpeg to stitch together frames and audio.

- Gifsicle for batch GIF resizing.

- Gramblr to upload videos to Instagram from my PC.
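For the FFmpeg step, a rough sketch of what stitching numbered frames and an audio track together can look like is below. The file names, the frame rate, and the helper name `ffmpeg_stitch_command` are placeholders, and the codec choices (H.264 video, AAC audio) are one common combination rather than the exact settings used for this series.

```python
def ffmpeg_stitch_command(frame_pattern, audio_path, out_path, fps=30):
    """Build an FFmpeg argument list that muxes numbered frames with an audio file."""
    return [
        "ffmpeg",
        "-framerate", str(fps),   # rate at which the image sequence is read
        "-i", frame_pattern,      # e.g. frames/00001.png, frames/00002.png, ...
        "-i", audio_path,         # the soundtrack
        "-c:v", "libx264",        # encode the video as H.264
        "-pix_fmt", "yuv420p",    # pixel format most players can handle
        "-c:a", "aac",            # encode the audio as AAC
        "-shortest",              # stop when the shorter input ends
        out_path,
    ]


# Hypothetical paths, for illustration only.
cmd = ffmpeg_stitch_command("frames/%05d.png", "song.wav", "out.mp4", fps=60)
```

The list can be handed to `subprocess.run(cmd, check=True)` on a machine where FFmpeg is installed. The `fps` value should match the rate the frames were captured at, otherwise the visuals drift out of sync with the audio.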

a few stills i’ve captured